Monorepo vs Polyrepo: What the PR benchmark data actually shows

Benchmark data from 320 teams comparing monorepo and polyrepo PR cycle times. What “good” looks like and why developer infrastructure matters, especially for AI agents.

The first benchmark data comparing monorepo vs polyrepo PR cycle times

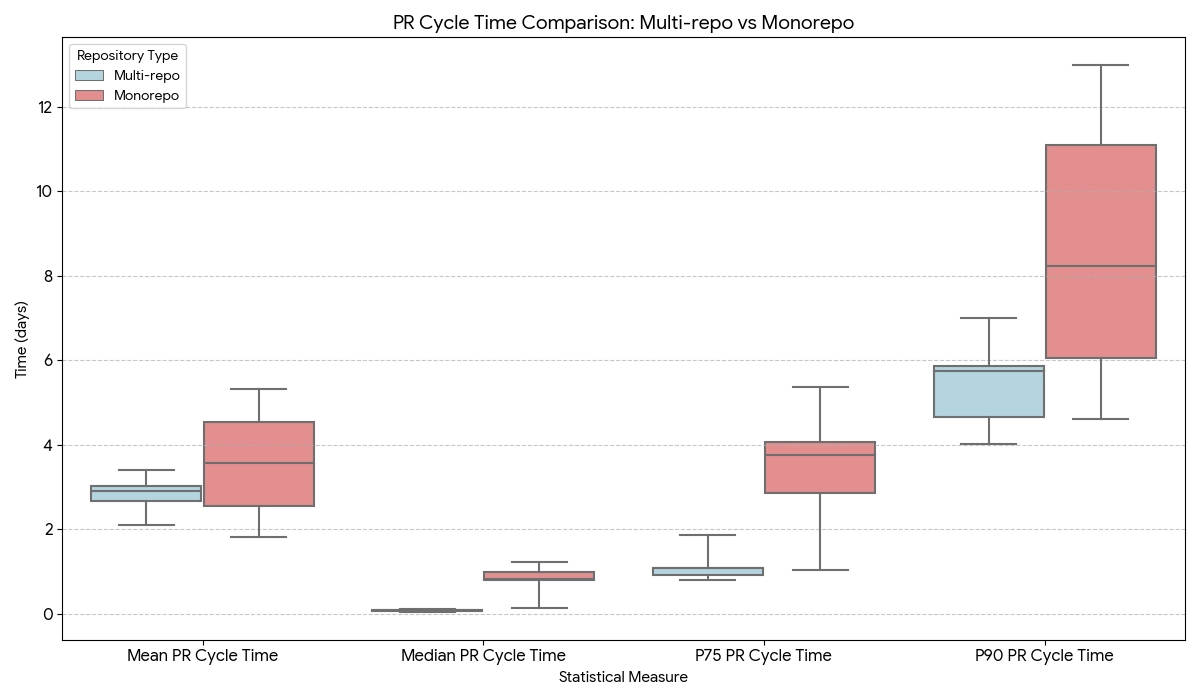

Here's the bottom line: PR cycle times in monorepos tend to look very different from those in polyrepo environments, and most industry benchmarks blur that distinction.

We analyzed 320 scrum teams over a full year and found that median PR cycle time in monorepos was 19 hours, compared to 2 hours in polyrepos (also called multi-repos). That's a meaningful gap, but it doesn't necessarily mean monorepos are inherently slower.

In principle, well-built monorepo infrastructure can achieve comparable performance. In practice, many teams struggle to keep developer tooling, CI systems, and ownership models aligned as repositories grow, which shows up as longer and more variable PR cycle times.

This matters now more than ever as AI coding agents enter the software development workflow. The same properties that slow humans down in monorepos may actually help AI agents operate more effectively, by making it easier for them to reason about dependencies and apply cross-cutting changes.

Organizations that understand and optimize monorepo flow today are effectively preparing their codebases for the agentic future.

Monorepo vs polyrepo: PR cycle time benchmarks

At Faros, we analyze software delivery data across thousands of engineering teams to understand how work actually flows through modern development systems. One of the common questions we hear is: "We develop in a monorepo. All the benchmarks out there are generic. What does good PR cycle time actually look like for us?"

It's a fair question. Most industry benchmarks blend together very different repo strategies, masking the real trade-offs teams are making. To explore this question, we analyzed pull request flow across 320 engineering teams over a one-year period, comparing teams primarily working in monorepos with those using polyrepos (multi-repository architectures).

Monorepos are not just "large repos." They represent a fundamentally different coordination model:

- Changes often span multiple ownership domains

- Reviews involve more stakeholders

- CI has a broader surface area

- The cost of coordination is higher, but so is the leverage of each change

To our knowledge, this is the first published analysis that compares monorepo and polyrepo PR performance using actual data at this scale.


How we measure PR cycle time

At Faros, we define PR cycle time as the elapsed time from when a pull request exits draft state and is ready for review, to when it is merged into the mainline branch.

This definition intentionally excludes time spent coding before review or iterating in draft. Once a PR is ready for review, cycle time reflects system-level flow: reviewer availability, CI latency, ownership boundaries, and prioritization.
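As a minimal sketch of the definition above, the calculation reduces to a timestamp difference. The function and parameter names here (`pr_cycle_time_hours`, `ready_for_review_at`, `merged_at`) are illustrative assumptions, not the Faros data model, and the timestamps are made up.

```python
from datetime import datetime, timezone
from typing import Optional


def pr_cycle_time_hours(ready_for_review_at: datetime,
                        merged_at: Optional[datetime]) -> Optional[float]:
    """Hours from 'ready for review' to merge; None if the PR never merged.

    Time spent in draft is excluded by construction, because the clock
    starts at ready_for_review_at rather than at PR creation.
    """
    if merged_at is None:
        return None
    if merged_at < ready_for_review_at:
        raise ValueError("merged_at precedes ready_for_review_at")
    return (merged_at - ready_for_review_at).total_seconds() / 3600.0


# Hypothetical PR: marked ready at 09:00 UTC, merged at 04:00 UTC the next day.
ready = datetime(2024, 3, 4, 9, 0, tzinfo=timezone.utc)
merged = datetime(2024, 3, 5, 4, 0, tzinfo=timezone.utc)
print(pr_cycle_time_hours(ready, merged))  # 19.0
```

Unmerged PRs return `None` rather than zero so they can be filtered out before computing medians or percentiles.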

That makes it a particularly useful lens for comparing repo strategies. For a deeper dive into this metric, see our guide on lead time for software delivery.

The data: monorepo vs polyrepo benchmarks

Across the teams analyzed, the following patterns emerged:

At the median, pull requests in monorepos take longer to merge than those in polyrepo environments. However, the median alone does not capture the full story.

The most important difference between the two models appears in the shape of the distribution, particularly in the upper percentiles.

Why the distribution matters

Two patterns become clear when looking beyond a single summary number.

The median gap: A typical PR in a monorepo takes 19 hours to merge, compared to just 2 hours in a polyrepo. Monorepo medians are not only higher but also more dispersed, reflecting differences in tooling and operational maturity across teams.

The volatility of the tail: At the 90th percentile (P90), those differences become much more pronounced. While some monorepo teams keep worst-case PRs under 5 days, many blow past the 10-day mark. Polyrepo teams, by contrast, show a much tighter, more predictable range.

The takeaway: Monorepos exhibit greater variability in PR cycle time outcomes. The heavier and more variable tails reflect differences in coordination, tooling, and operational maturity across teams.
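To illustrate why the tail matters, here is a small sketch that summarizes a list of PR cycle times by median and P90. The two sample lists are invented for illustration and are not drawn from the benchmark data; the nearest-rank percentile method is one common convention among several and is an assumption here.

```python
from statistics import median


def percentile_nearest_rank(values, pct):
    """Nearest-rank percentile: smallest value with at least pct% of data at or below it."""
    if not values:
        raise ValueError("values must be non-empty")
    ordered = sorted(values)
    # Integer ceiling of pct * n / 100, avoiding floating-point rounding.
    rank = (pct * len(ordered) + 99) // 100
    return ordered[max(rank, 1) - 1]


def summarize(cycle_times_hours):
    """Return the median and P90 of a list of PR cycle times in hours."""
    return {
        "median": median(cycle_times_hours),
        "p90": percentile_nearest_rank(cycle_times_hours, 90),
    }


# Hypothetical teams with identical medians but very different tails.
tight_tail = [1, 2, 2, 3, 4, 5, 6, 8, 10, 12]
heavy_tail = [1, 2, 2, 3, 4, 5, 6, 8, 120, 240]

print(summarize(tight_tail))  # {'median': 4.5, 'p90': 10}
print(summarize(heavy_tail))  # {'median': 4.5, 'p90': 120}
```

Both lists report the same median, yet the second team's slowest PRs take days rather than hours, which is the kind of difference a median-only benchmark hides.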

In principle, well-engineered monorepo infrastructure can achieve performance comparable to polyrepo environments. The challenge is operational: as repositories grow, the surrounding developer infrastructure—build systems, CI pipelines, and ownership models—must evolve alongside them.

When that infrastructure lags behind repository scale, the result often appears as longer and more variable PR cycle times.

The monorepo maturity curve

Looking across many organizations, monorepo performance often follows a maturity curve.

Early in the lifecycle of a monorepo, teams frequently experience slower and less predictable PR flow. As the repository grows, CI pipelines expand, build times increase, and pull requests begin to cross more ownership boundaries. Without supporting infrastructure, these dynamics can create long feedback loops.

Over time, high-performing organizations invest in systems that maintain fast feedback loops even as the repository scales. These often include incremental build systems, intelligent test selection, automated review routing, and merge queues that manage concurrency safely.
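One of the techniques mentioned above, intelligent test selection, can be sketched as a walk over a reverse dependency graph: only targets that transitively depend on a changed target need to be rebuilt and retested. This is a simplified illustration, not how any particular build system works; the target names and the `affected_targets` helper are hypothetical.

```python
from collections import deque


def affected_targets(deps, changed):
    """Return the changed targets plus everything that transitively depends on them.

    deps maps each target to the set of targets it depends on.
    """
    # Invert the graph: for each target, which targets depend on it?
    reverse = {}
    for target, requirements in deps.items():
        reverse.setdefault(target, set())
        for requirement in requirements:
            reverse.setdefault(requirement, set()).add(target)

    # Breadth-first walk outward from the changed targets.
    affected = set(changed)
    queue = deque(changed)
    while queue:
        current = queue.popleft()
        for dependent in reverse.get(current, ()):
            if dependent not in affected:
                affected.add(dependent)
                queue.append(dependent)
    return affected


# Hypothetical monorepo layout.
deps = {
    "libs/auth": set(),
    "libs/ui": set(),
    "services/api": {"libs/auth"},
    "apps/web": {"libs/ui", "services/api"},
    "apps/admin": {"libs/ui"},
}

print(sorted(affected_targets(deps, {"libs/auth"})))
# ['apps/web', 'libs/auth', 'services/api']
```

In this example a change to `libs/auth` skips `apps/admin` and `libs/ui` entirely, which is where the CI time savings come from as the repository grows.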

As a result, monorepo performance tends to vary more widely across organizations than polyrepo performance does.

A simplified maturity curve often looks like this:

Where monorepo PRs get stuck

Across organizations, slow monorepo PRs consistently cluster around a few bottleneck categories:

Review topology complexity. Changes often span multiple ownership domains, increasing reviewer count and review latency.

CI surface area. Monorepos trigger larger, more conservative test matrices. Correct, but time-consuming.

Large, cross-cutting changes. Monorepos enable broad refactors. These PRs are high leverage, but expensive to review and risky to merge.

Queueing and priority effects. Shared repos create implicit queues. High-priority work moves quickly; everything else waits.

None of these are accidental. They are the natural consequences of optimizing for shared context. As a result, improving monorepo performance is less about eliminating these forces and more about designing around them.
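The review topology bottleneck can be made concrete by counting how many ownership domains a single PR touches. The sketch below assumes a simple longest-prefix mapping from directory to owning team; the mapping, team names, and `owners_touched` helper are hypothetical and do not reproduce the matching rules of any specific code-ownership tool.

```python
def owners_touched(changed_paths, ownership):
    """Return the set of owning teams for a PR's changed files.

    ownership maps a directory prefix to a team; the longest matching
    prefix wins. Files with no matching prefix are reported as 'unowned'.
    """
    owners = set()
    for path in changed_paths:
        best = None
        for prefix in ownership:
            if path.startswith(prefix) and (best is None or len(prefix) > len(best)):
                best = prefix
        owners.add(ownership[best] if best is not None else "unowned")
    return owners


# Hypothetical ownership map and PR.
ownership = {
    "services/payments/": "team-payments",
    "services/": "team-platform",
    "libs/ui/": "team-design-systems",
}
changed = [
    "services/payments/api.py",
    "services/gateway/routes.py",
    "libs/ui/button.tsx",
    "README.md",
]

print(sorted(owners_touched(changed, ownership)))
# ['team-design-systems', 'team-payments', 'team-platform', 'unowned']
```

A PR that resolves to four owners, as this one does, needs four sets of reviewers to respond before it can merge, so tracking this count per PR is one way to spot review latency before it shows up in the tail.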

What does "good" PR cycle time look like for monorepos?

Based on our analysis, here's a reasonable target framework for engineering efficiency in monorepo environments:

The goal isn't perfection but predictability. Teams that achieve these targets typically combine several practices:

- Clear code ownership and automated review routing

- Disciplined pull request sizing

- CI pipelines optimized for incremental builds

- Automated merge queues and release pipelines

- Strong observability into build and review bottlenecks

While a deep dive into these architectures is beyond our current scope, monorepo.tools offers a definitive breakdown of modern build systems, and Uber's Developer Experience blog provides a masterclass in managing these dynamics at massive scale.

For teams looking to implement these patterns with data-driven precision, an engineering productivity platform can help identify exactly where your tail latency originates and track improvement over time.

Why monorepo efficiency matters more in an agentic world

Here's what we hypothesize about the future: monorepos may actually be the preferred environment for AI agents tasked with complex, cross-cutting engineering work.

AI agents struggle most in fragmented systems. Context is split across repositories, interfaces are implicit, and dependencies must be inferred. Monorepos invert this problem. They provide a unified code graph, explicit dependency relationships, and the ability to reason about and modify multiple components atomically.

These properties can make certain tasks easier for AI agents, including large-scale refactoring, dependency updates, API migrations, and cross-service consistency improvements. If AI agents increasingly participate in development workflows, the shared context provided by monorepos may actually become an important advantage.

If this hypothesis holds, then improving monorepo efficiency isn't just about developer happiness anymore. It's about future leverage. Organizations that reduce tail latency in PR cycle time, clarify ownership and review semantics, accelerate CI feedback, and improve observability into flow bottlenecks are positioning their codebases as high-throughput substrates for AI-assisted development.

For engineering leaders measuring AI transformation impact, understanding how your monorepo structure affects agent performance will become increasingly critical.


Conclusion

The benchmark data shows that monorepo environments tend to exhibit longer and more variable PR cycle times than polyrepo environments.

However, this should not be interpreted as a fundamental limitation of monorepos themselves.

In principle, well-engineered monorepo infrastructure can support fast and predictable development loops. The challenge is operational: as repositories grow, build systems, CI pipelines, ownership models, and automation must evolve to match that scale.

Organizations that invest in this infrastructure often achieve strong development flow even in very large repositories.

More importantly, as AI agents become central to how code gets written and reviewed, the structural advantages of monorepos may shift from "necessary overhead" to "strategic asset." Teams that understand and optimize how work flows through their repositories today will be better positioned to support the increasingly agentic workflows of the future.
